AI Is Saving Time — So Why Are Operating Models Still So Broken?

Thoughts on Workday’s New AI Report

If you were following the news from Davos last month, you know AI was top of mind for just about everyone.

Everywhere you turned, leaders were talking about productivity gains, time saved, and the promise of doing more with less. And according to new global research from Workday, that optimism isn’t misplaced. Most employees are already saving meaningful time each week by using AI at work.

And yet, something isn’t adding up.

If AI is delivering on speed, why do so many teams still feel overloaded? Why does work feel just as heavy—or even heavier—than before? And why are organizations struggling to point to tangible business outcomes that match all that saved time?

It might be tempting to blame AI for not living up to its hype, but the problem isn’t that AI isn’t working. It’s that most HR operating models haven’t caught up.

The productivity paradox hiding in plain sight

Workday’s research surfaces a contradiction many leaders sense but haven’t fully named yet. On paper, AI is a productivity win:

- The vast majority of employees say AI makes them more productive

- Most report saving between one and seven hours per week

- Adoption is already widespread, especially among younger and mid-career professionals

But when you look more closely, nearly 40% of that saved time is quietly lost, spent fixing errors, rewriting content, verifying sources, and double-checking AI outputs. Only a small minority of employees consistently experience clear, net-positive outcomes from AI use.

In other words: AI is speeding work up, but it’s not moving it forward. This is the AI productivity paradox. And it’s why many organizations feel stuck between enthusiasm and frustration.
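To put rough numbers on that gap, here is a minimal sketch of the arithmetic. It assumes the "nearly 40%" figure applies evenly across the one-to-seven-hour range reported above, which the research may not claim. The function name and the sample hours are illustrative, not taken from the report.

```python
# Illustrative arithmetic only. The 1-7 hour range and the ~40% rework share
# come from the figures cited above; everything else is an assumption.
REWORK_SHARE = 0.40  # "nearly 40%" rounded to 40% for simplicity


def net_hours_saved(gross_hours: float, rework_share: float = REWORK_SHARE) -> float:
    """Hours actually kept after subtracting time spent fixing AI output."""
    return gross_hours * (1 - rework_share)


# Low end, midpoint, and high end of the reported weekly range.
for gross in (1, 4, 7):
    kept = net_hours_saved(gross)
    print(f"{gross} h saved per week -> about {kept:.1f} h kept")
```

Under those assumptions, even an employee at the top of the range keeps a little over four of the seven hours saved, and the rest goes to checking and correcting.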

We spoke about this in our recent Rival-sponsored HRCI webinar with Mercer, Building the Connected HR Ecosystem Behind Today’s Most Effective People Teams. There, Tara Cooper from Mercer, Maureen Mondesir, Founder of Viviane Care Group, and Rival’s Chief Product Officer Poornima Farrar made clear that increasing AI usage without changing how work is designed just magnifies the problem. The research shows that organizations investing primarily in technology, without reinvesting in skills, role clarity, and operating norms, are far more likely to see AI gains evaporate into rework.

Speed went up. Accountability didn’t change.

One of the most revealing findings in the Workday research isn’t about the technology at all, but about who carries the burden.

The employees using AI most frequently are often the ones doing the most rework. They’re optimistic about AI’s potential, but they’re also spending significant time auditing its outputs with the same (or greater) rigor they apply to human work.

That makes sense when you consider the nature of the work. In areas like HR, operations, finance, and communications, “mostly right” isn’t good enough. Tone matters. Accuracy matters. Compliance matters. And when AI output falls short, that means someone has to fix it. So instead of getting time back, employees inherit a new layer of invisible work: verification, correction, and risk management.

The real issue: AI layered onto outdated work design

It’s tempting to frame this as a tech problem, prompt-writing problem, or even a maturity problem, but the research points to something more structural.

In most organizations, roles haven’t been meaningfully updated to reflect AI-enabled work. Advanced tools are being layered onto job designs that were created long before AI entered the picture. Expectations around quality, ownership, and decision-making remain unchanged, even as the pace of output accelerates.

Employees are asked to move faster, but, frustratingly, are still measured the same way. They’re encouraged to use AI, but are left to figure out how much judgment to apply, when to trust the outputs, and where accountability ultimately sits. When things go wrong, the blame game starts.

“More AI” can’t fix this

AI is an incredible tool, but to work, it has to sit on viable infrastructure. As organizations deploy it into existing processes and operations, they can see that something is off, yet most respond by doubling down on more tech: more tools, more use cases, more encouragement to experiment.

This is throwing good money after bad. More AI on top of unchanged work design only magnifies the problem, and, as noted above, technology-first investment is far more likely to see its gains evaporate into rework.

This is why the AI conversation is starting to shift. The question leaders are now asking isn’t “How much time does AI save?” It’s “Why doesn’t that time seem to turn into better outcomes?”

That’s where operating models enter the picture, and where the real opportunity begins.

What actually changes when operating models catch up

When organizations do start turning AI time savings into real outcomes, the shift isn’t subtle and it isn’t accidental.

The companies seeing returns aren’t just deploying AI more aggressively. They’re changing how work is designed, how decisions are made, and how accountability flows across the organization. They’re treating AI as a catalyst for modernizing the system around the work, not a shortcut layered onto it.

Workday’s research reinforces this point. Employees who consistently experience positive outcomes from AI are far more likely to work in environments where:

- Roles have been updated to reflect AI-enabled work

- Expectations for quality and judgment are explicit

- Time saved is intentionally reinvested, not silently reallocated

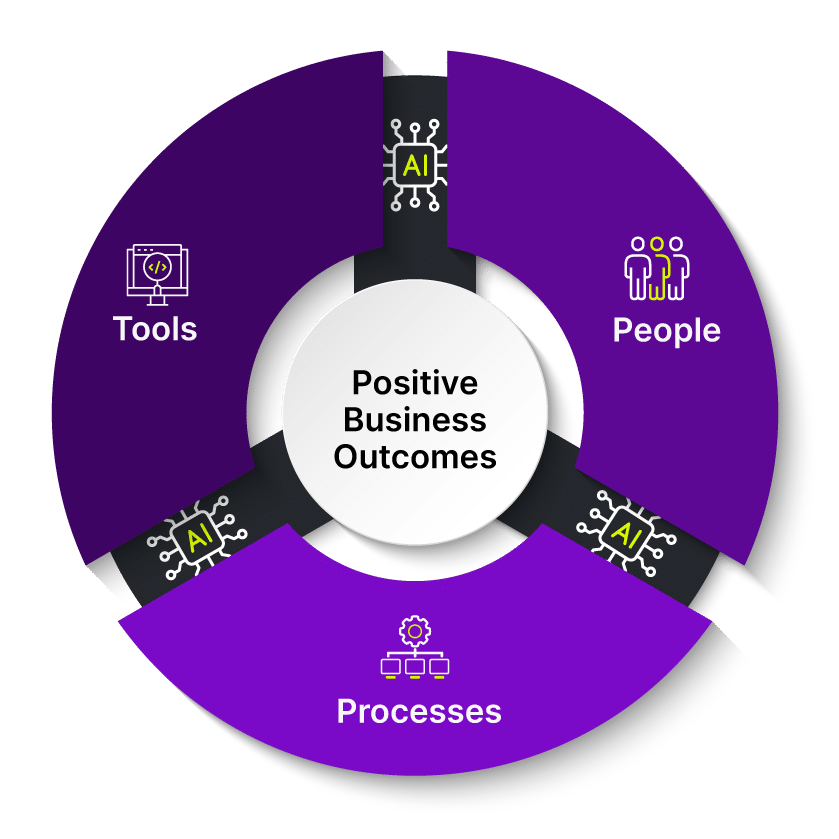

In other words, AI works best when it’s embedded inside a coherent operating model — one that aligns tools, people, and processes around outcomes, not activity.

The missing link: knowing how work actually moves

This is where many HR teams get stuck. They invest in AI, but the surrounding ecosystem remains fragmented. Recruiting, onboarding, learning, performance, and workforce planning all operate on slightly different assumptions, with different data, different workflows, and different definitions of “success.”

So even when AI accelerates individual tasks, the system as a whole doesn’t move any faster. Bottlenecks shift instead of disappearing. Hand-offs still require human intervention. Judgment still lives in inboxes and meetings, not in the design of the work itself.

Without a connected operating model, AI simply can’t reduce complexity. But it sure does expose it.

From productivity to progress

So what do you do about it? The most effective organizations make a clear pivot: they stop measuring success by time saved and start measuring it by outcomes.

That means asking different questions:

- Did AI improve the quality of decisions?

- Did it reduce rework downstream?

- Did it make collaboration easier or harder?

- Did it free people to do higher-value work, or just more work?

This shift sounds simple, but it requires real changes underneath. Job expectations need to evolve, workflows need to be redesigned, and teams need shared clarity about where AI supports the work and where human judgment still matters most.

This is the unglamorous work of operating model change. And it’s where AI value is either realized or lost.

The temptation right now is to treat this phase of AI adoption as temporary growing pains, something that will resolve itself as tools improve. But the data suggests otherwise. Without intentional changes to how work is designed and supported, organizations risk locking in a new kind of inefficiency: faster output paired with higher cognitive load, invisible rework, and creeping burnout among their most capable employees.

The opportunity ahead

AI may be saving time. But, as we observed in our recent HRCI webinar, only operating model change turns that time into value.

The organizations that use AI most successfully will be the ones that use this moment to modernize how work actually gets done, aligning technology with roles, workflows, and outcomes in a way that’s sustainable.

Rival Workflow is designed to sit underneath the work, helping HR teams orchestrate processes across recruiting, onboarding, learning, performance, and employee support. It creates shared clarity around who does what, when, and why, so AI-enabled work doesn’t stall in handoffs, inboxes, or rework loops.

When workflows are clear and connected:

- AI outputs move forward instead of circling back

- Accountability is explicit, not assumed

- Time saved can be reinvested

From insight to execution

The takeaway from Workday’s research is clear: AI alone doesn’t fix broken operating models, but it does make fixing them absolutely unavoidable.

The opportunity now is to use this moment to rethink how work is designed, supported, and connected across the HR ecosystem. That’s where durable value lives. And it’s where teams can finally turn AI speed into better outcomes — for the business and for the people doing the work.

Watch our HRCI webinar, Building the Connected HR Ecosystem Behind Today’s Most Effective People Teams, on demand to continue the conversation and see how leading HR teams are approaching this shift.